Actor-Critic

Simplest

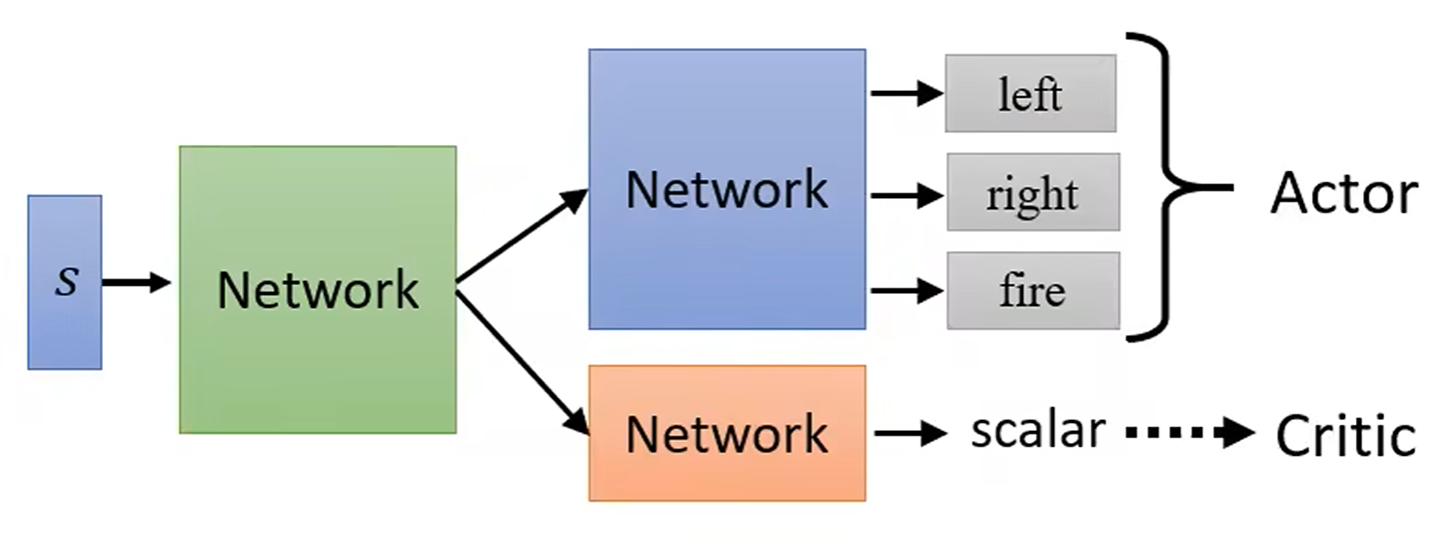

The basic idea of the Actor-Critic framework:

- Actor: policy update (the policy)

- Critic: policy evaluation (the value function)
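A minimal sketch of one actor-critic step, to make the two roles concrete. It assumes a tabular softmax actor and a tabular action-value critic updated SARSA-style; the names `theta`, `q`, `qac_step`, and the step sizes are illustrative choices, not taken from these notes.

```python
import math


def softmax(prefs):
    """Numerically stable softmax over a list of action preferences."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def qac_step(theta, q, s, a, r, s_next, a_next, done,
             gamma=0.99, alpha_actor=0.1, alpha_critic=0.1):
    """Update actor and critic in place from one transition (s, a, r, s', a').

    theta[s][b]: actor's preference for action b in state s (softmax policy).
    q[s][b]:     critic's estimate of the action value (assumed tabular).
    """
    probs = softmax(theta[s])
    q_sa = q[s][a]  # critic's current evaluation of the action taken

    # Actor (policy update): theta += alpha * grad log pi(a|s) * q(s, a).
    # For a softmax policy, d log pi(a|s) / d theta[s][b] = 1[a == b] - pi(b|s).
    for b in range(len(theta[s])):
        grad_log_pi = (1.0 if b == a else 0.0) - probs[b]
        theta[s][b] += alpha_actor * q_sa * grad_log_pi

    # Critic (policy evaluation): SARSA-style TD update toward r + gamma * q(s', a').
    target = r + (0.0 if done else gamma * q[s_next][a_next])
    q[s][a] += alpha_critic * (target - q_sa)
```

The actor uses the critic's value from before the critic update, so both updates are driven by the same estimate.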

Advantage

Advantage actor-critic (A2C): the multi-step TD error serves as the advantage estimate.
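One common form of the n-step TD error, written under an assumed indexing convention where $r_t$ is the reward received after acting in $s_t$ (the symbols are not defined in these notes):

$$\hat{A}_t = \sum_{k=0}^{n-1} \gamma^k r_{t+k} + \gamma^n V(s_{t+n}) - V(s_t)$$

A rough sketch of computing it over a finite rollout follows. The function name and arguments are hypothetical, and truncating the n-step window at the end of the rollout is an assumption of this sketch.

```python
def n_step_advantages(rewards, values, bootstrap_value, n, gamma=0.99):
    """Return n-step TD-error advantage estimates for one rollout.

    rewards[t]:      reward received after acting in state s_t
    values[t]:       critic estimate V(s_t)
    bootstrap_value: V of the state after the last reward (0.0 if terminal)
    """
    horizon = len(rewards)
    v = list(values) + [bootstrap_value]
    advantages = []
    for t in range(horizon):
        end = min(t + n, horizon)  # shorten the window near the rollout's end
        n_step_return = 0.0
        for k in range(t, end):
            n_step_return += gamma ** (k - t) * rewards[k]
        n_step_return += gamma ** (end - t) * v[end]  # bootstrap from the critic
        advantages.append(n_step_return - v[t])
    return advantages
```

With `n=1` this reduces to the one-step TD error `r + gamma * V(s') - V(s)`.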

Importance Sampling

Importance sampling ratio: reweights data sampled from a behavior policy so that it can be used to update the target policy.
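In the usual notation (assumed here, since the notes do not define symbols), with target policy $\pi$ and behavior policy $\beta$:

$$\rho_t = \frac{\pi(a_t \mid s_t, \theta)}{\beta(a_t \mid s_t)}$$

A small sketch of computing the ratio from log-probabilities, which is numerically safer than dividing raw probabilities. The `clip` argument is an optional variance-control trick added for illustration and is not part of the definition.

```python
import math


def importance_ratio(target_log_prob, behavior_log_prob, clip=None):
    """Return pi(a|s) / beta(a|s), computed from the two log-probabilities."""
    ratio = math.exp(target_log_prob - behavior_log_prob)
    if clip is not None:
        ratio = min(ratio, clip)  # optional truncation (assumption, not in the notes)
    return ratio
```

In an off-policy actor-critic update, each sample's gradient term would be multiplied by this ratio.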

Deterministic

The DDPG (Deep Deterministic Policy Gradient) algorithm:

- Actor: deterministic policy

- Critic: Q-function

- Target networks

- Experience replay
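The four components above can be sketched without a deep-learning library. This is an illustrative outline only: `ReplayBuffer`, `td_target`, and `soft_update` are hypothetical names, the networks are stood in for by plain callables and flat parameter lists, and the soft (Polyak) target update with rate `tau` is the variant commonly paired with DDPG rather than something stated in these notes.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of transitions; the oldest entries are dropped first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def add(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks temporal correlation.
        return random.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def td_target(reward, next_state, done, target_actor, target_critic, gamma=0.99):
    """Critic regression target: r + gamma * Q'(s', mu'(s')), using target networks."""
    if done:
        return reward
    next_action = target_actor(next_state)  # deterministic action, no sampling
    return reward + gamma * target_critic(next_state, next_action)


def soft_update(target_params, online_params, tau=0.005):
    """Move target parameters a small step toward the online parameters, in place."""
    for i, w in enumerate(online_params):
        target_params[i] = (1.0 - tau) * target_params[i] + tau * w
```

The critic would be trained to regress `Q(s, a)` onto `td_target(...)` for sampled transitions, and the actor would be updated to increase `Q(s, mu(s))`; both steps need gradients and are omitted here.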

Exploration with Ornstein-Uhlenbeck noise
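A possible sketch of an Ornstein-Uhlenbeck noise process, discretized with a simple Euler step. The class name and the default values of `theta`, `sigma`, and `dt` are assumptions chosen for illustration, not settings from these notes.

```python
import math
import random


class OUNoise:
    """Temporally correlated noise that drifts back toward the mean `mu`."""

    def __init__(self, size, mu=0.0, theta=0.15, sigma=0.2, dt=1.0, seed=None):
        self.size = size
        self.mu = mu
        self.theta = theta  # strength of the pull back toward mu
        self.sigma = sigma  # scale of the random perturbation
        self.dt = dt
        self.rng = random.Random(seed)
        self.reset()

    def reset(self):
        """Restart the process at its mean, e.g. at the start of each episode."""
        self.state = [self.mu] * self.size

    def sample(self):
        """Advance one step: x += theta * (mu - x) * dt + sigma * sqrt(dt) * N(0, 1)."""
        self.state = [
            x
            + self.theta * (self.mu - x) * self.dt
            + self.sigma * math.sqrt(self.dt) * self.rng.gauss(0.0, 1.0)
            for x in self.state
        ]
        return list(self.state)
```

During data collection, the exploratory action would be the deterministic actor's output plus `noise.sample()`, typically clipped to the valid action range.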